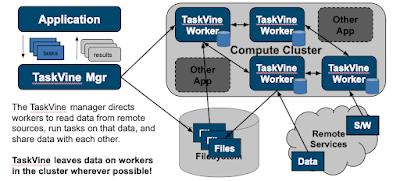

TaskVine is our newest framework for building large-scale, data-intensive dynamic workflows. This is the second in a series of posts giving a brief introduction to the system.

A TaskVine application consists of a manager and multiple workers running in a cluster. The manager is a Python program (or C, if you prefer) that defines the files and tasks making up a workflow and hands them to the TaskVine library to run. Both the files and the tasks are distributed to the workers and put together there to produce the results. As a general rule, data is left in place on the workers wherever possible so that later tasks can consume it, rather than being brought back to the manager.

The workflow begins by declaring the input files it needs. Here, "file" is used generally and means any kind of data asset: a single file on the filesystem, a large directory tree, a tarball downloaded from an external URL, or even just a single string passed from the manager. These files are pulled into the worker nodes on the cluster as needed. Then, the manager defines tasks that consume those files. Each task consumes one or more files and produces one or more files.

A file declaration looks like one of these:

    a = FileURL("http://server.edu/path/to/data.tar.gz")
    b = FileLocal("/path/to/simulate.exe")
    c = FileTemp()

And then a task declaration looks like this:

    t = Task("simulate.exe -i input.dat")
    # b is the local executable and a is the downloaded data
    t.add_input(b,"simulate.exe")
    t.add_input(a,"input.dat")
    t.add_output(c,"output.txt")
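To make the name mapping concrete, here is a minimal sketch in plain Python. It does not use the TaskVine library: `ToyFile`, `ToyTask`, and the paths below are hypothetical stand-ins invented for illustration. It only shows the idea that one declared file can appear under a different, convenient name in each task that uses it.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class ToyFile:
    """Hypothetical stand-in for a declared file (not a TaskVine class)."""
    kind: str    # "url", "local", or "temp"
    source: str  # where the data comes from; empty for temp files

@dataclass
class ToyTask:
    """Hypothetical stand-in for a task: a command plus name mappings."""
    command: str
    inputs: dict = field(default_factory=dict)   # sandbox name -> ToyFile
    outputs: dict = field(default_factory=dict)  # sandbox name -> ToyFile

    def add_input(self, f, name):
        self.inputs[name] = f

    def add_output(self, f, name):
        self.outputs[name] = f

    def sandbox_names(self):
        """Every name the task would see inside its sandbox."""
        return sorted(self.inputs) + sorted(self.outputs)

# One shared dataset, declared once (path is made up for this sketch).
dataset = ToyFile("local", "/shared/run-2023/events.csv")
summary = ToyFile("temp", "")

# Two tasks see the same file under names that suit each of them.
count = ToyTask("wc -l events.csv")
count.add_input(dataset, "events.csv")
count.add_output(summary, "count.txt")

head = ToyTask("head -n 5 in.csv")
head.add_input(dataset, "in.csv")
```

Both tasks refer to the same underlying `ToyFile` object, so a scheduler could recognize that only one copy of the data is needed even though the sandbox names differ.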

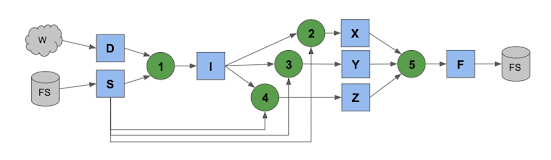

Tasks produce files which get consumed by more tasks until you have a large graph of the entire computation that might look like this:
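The graph is implied by which files each task reads and writes. As a rough sketch of that idea (again plain Python, not the TaskVine API), the snippet below derives task dependencies from a hypothetical four-task workflow and uses the standard library's `graphlib` to find one valid execution order. The task and file names are invented for this example.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: task name -> (input files, output files)
tasks = {
    "simulate_1": ({"exe", "data"}, {"sim_1"}),
    "simulate_2": ({"exe", "data"}, {"sim_2"}),
    "merge":      ({"sim_1", "sim_2"}, {"merged"}),
    "plot":       ({"merged"}, {"figure"}),
}

def build_task_graph(tasks):
    """Map each task to the set of tasks whose outputs it consumes."""
    # First record which task produces each file.
    producer = {}
    for name, (_, outputs) in tasks.items():
        for f in outputs:
            producer[f] = name
    # A task depends on the producer of each of its inputs; files with
    # no producer (here "exe" and "data") are original workflow inputs.
    graph = {}
    for name, (inputs, _) in tasks.items():
        graph[name] = {producer[f] for f in inputs if f in producer}
    return graph

graph = build_task_graph(tasks)

# Any topological order of the graph is a legal way to run the tasks.
order = list(TopologicalSorter(graph).static_order())
```

The two simulation tasks have no dependencies on each other, so they could run at the same time on different workers, while `merge` has to wait for both.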

To keep things clean, each task runs in a sandbox, where files are mapped in under names convenient to that task. As tasks produce outputs, those outputs generally stay on the worker where they were created until needed elsewhere. Later tasks that consume those outputs simply pull them from other workers as needed (or, in the ideal case, run on the same node where the data already is).
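One simple way to think about the "ideal case" is as a placement heuristic: prefer the worker that already holds the most of a task's inputs. The sketch below illustrates that heuristic only. It is an assumption made for this post, not a description of TaskVine's actual scheduler, and the worker and file names are made up.

```python
def pick_worker(task_inputs, worker_cache):
    """Return the worker already holding the most of the task's inputs.

    Ties go to the alphabetically first worker so the result is stable.
    """
    def cached_count(worker):
        return len(task_inputs & worker_cache[worker])
    return max(sorted(worker_cache), key=cached_count)

def files_to_transfer(task_inputs, worker, worker_cache):
    """Inputs the chosen worker would still need to pull from elsewhere."""
    return task_inputs - worker_cache[worker]

# Hypothetical cluster state: worker name -> files it currently holds.
worker_cache = {
    "w1": {"exe", "data"},
    "w2": {"exe", "sim_1"},
    "w3": set(),
}

# A task reading sim_1 and exe fits best where both already live.
chosen = pick_worker({"sim_1", "exe"}, worker_cache)
needed = files_to_transfer({"sim_1", "exe"}, chosen, worker_cache)
```

In this example the task lands on `w2` and nothing has to move. A task placed on `w3` would instead have to pull both files from peers.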

A workflow might run step by step like this:

You can see that popular files will be naturally replicated through the cluster, which is why we say the workflow "grows like a vine" as it runs. When final outputs from the workflow are needed, the manager pulls them back to the head node or sends them to another storage target, as directed.
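The replication effect can be imitated with a small bookkeeping simulation. This is a toy model under stated assumptions (each task's inputs end up cached on the worker that ran it, and each output starts on the worker that produced it), with a hypothetical schedule. It is not how TaskVine tracks replicas internally.

```python
from collections import defaultdict

def simulate_replicas(schedule):
    """Track which workers hold each file after running a schedule.

    schedule is a list of (worker, input_files, output_files) tuples,
    given in execution order.
    """
    replicas = defaultdict(set)
    for worker, inputs, outputs in schedule:
        # Running a task leaves a copy of every input on that worker.
        for f in inputs:
            replicas[f].add(worker)
        # Outputs are created on, and stay on, the worker that ran the task.
        for f in outputs:
            replicas[f].add(worker)
    return dict(replicas)

# Hypothetical schedule: three simulations fan out, then one merge.
schedule = [
    ("w1", ["exe", "data"], ["sim_1"]),
    ("w2", ["exe", "data"], ["sim_2"]),
    ("w3", ["exe", "data"], ["sim_3"]),
    ("w1", ["sim_1", "sim_2", "sim_3"], ["merged"]),
]

replicas = simulate_replicas(schedule)
```

The shared inputs `exe` and `data` end up on all three workers, while the final `merged` result exists only on `w1` until the manager asks for it.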
