Andrew Hennessy, a junior at Notre Dame, recently completed a summer REU project in which he analyzed and improved the performance of TopEFT, a high energy physics analysis application built using the Coffea framework and the Work Queue distributed execution system.

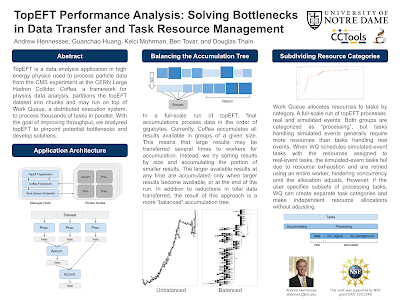

This applications runs effectively in production, but takes about one hour to complete an analysis on O(1000) cores -- we would like to get it down to fifteen minutes or less in order to enable "near real time" analysis capability. Andrew built a visualization of the accumulation portion and observed one problem: the data flow is highly unbalanced, resulting in the same data moving around multiple times. He modified the scheduling of the accumulation step, resulting in a balanced tree with reduced I/O impact.

Next, he observed that processing tasks acting on different datasets have different resource needs: tasks consuming monte carlo (simulated) data take much more time and memory than tasks consuming Production (acquired) data. This results in a slowdown as the system gradually adjusts to the changing task size. The solution here is to ask the user to label the datasets appropriately, and place the Monte Carlo and Production tasks in different "categories" in Work Queue, so that resource prediction will be more accurate.

See the entire poster here:

No comments:

Post a Comment