Prof. Thain gave a talk at HTCondor Week 2022 with an overview of some of our recent work on resource management in high throughput scientific workflows. An HTCondor talk requires a "bird" metaphor, so I proposed the following question:

How many eggs can you fit in one nest?

A modern cluster is composed of large machines that may have hundreds of cores each, along with memory, disk, and perhaps other co-processors. While it is possible to write a single application to use the entire node, it is more common to pack multiple applications into a single node, so as to maximize the overall throughput of the system.

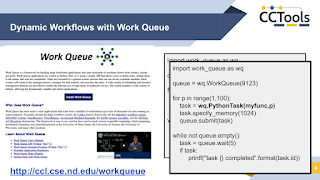
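
As a back-of-the-envelope illustration of the "eggs in a nest" question, here is a minimal Python sketch that counts how many identical tasks fit on one node. The `Resources` type, the `tasks_per_node` function, and the numbers in the usage note are hypothetical and chosen only for illustration; a real scheduler must also deal with tasks of differing sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    """Hypothetical description of a node's capacity or a task's request."""
    cores: int
    memory_mb: int
    disk_mb: int


def tasks_per_node(node: Resources, task: Resources) -> int:
    """Return how many identical tasks fit on one node.

    The scarcest resource decides the answer, so we take the minimum
    of the per-resource quotients.
    """
    if min(task.cores, task.memory_mb, task.disk_mb) <= 0:
        raise ValueError("task requests must be positive")
    return min(
        node.cores // task.cores,
        node.memory_mb // task.memory_mb,
        node.disk_mb // task.disk_mb,
    )
```

For example, with a made-up node of 64 cores, 256000 MB of memory, and 1000000 MB of disk, and tasks that each request 2 cores, 12000 MB of memory, and 10000 MB of disk, memory runs out first: only 21 tasks fit, even though the cores alone would allow 32.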

We design and build frameworks like Work Queue that allow end users to construct high throughput workflows consisting of large numbers of tasks:

But how does the end user (or the system) figure out what resources are needed by each task? The end user might have some guess at the cores and memory needed by a single task, but these values can change dramatically when the parameters of the application are changed. Here is an example of a computational chemistry application that shows highly variable resource consumption:

CCL grad student Thanh Son Phung came up with a technique that dynamically divides the tasks into "small" and "large" allocation buckets, allowing us to automatically allocate memory and pack tasks without any input or assistance from the user:
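
To make the idea of "small" and "large" buckets concrete, here is a deliberately simplified Python sketch. It is not the algorithm from the paper cited below: it assumes we only track peak memory of completed tasks, that every new task is first tried with the small allocation, and that a task which exceeds it is retried once with the large allocation. The function names and the cost model are assumptions made for illustration.

```python
from typing import List, Tuple


def split_buckets(peaks_mb: List[float]) -> Tuple[float, float]:
    """Choose (small_alloc, large_alloc) from observed peak memory values.

    Assumed policy: try the small allocation first, retry with the large
    one on failure. Each candidate split of the sorted observations is
    scored by its expected memory cost per task, and the cheapest wins.
    """
    if not peaks_mb:
        raise ValueError("need at least one observation")
    values = sorted(peaks_mb)
    large = values[-1]
    n = len(values)
    # Baseline: a single bucket where every task gets the largest value seen.
    best_small, best_cost = large, float(large)
    for i in range(n - 1):
        small = values[i]
        frac_small = (i + 1) / n
        # Tasks that fit pay `small`; the rest pay for a failed small
        # attempt plus a retry with the large allocation.
        cost = frac_small * small + (1 - frac_small) * (small + large)
        if cost < best_cost:
            best_small, best_cost = small, cost
    return best_small, large


def allocation_for(attempt: int, buckets: Tuple[float, float]) -> float:
    """Memory to allocate for a given attempt number (0 = first try)."""
    small, large = buckets
    return small if attempt == 0 else large
```

With made-up observations of 100, 110, 120, 105, 900, and 950 MB, this sketch settles on a small bucket of 120 MB and a large bucket of 950 MB, so most tasks are packed tightly and only the outliers pay for a retry.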

Here is a different approach that we use in a high energy physics data analysis application, in which a dataset can be split up into tasks of variable size. Instead of taking the tasks as they are, we can resize them dynamically in order to achieve a specific resource consumption:

Ben Tovar, a research software engineer in the CCL, devised a technique for modelling the expected resource consumption of each task, and then dynamically adjusting the task size in order to hit a resource target:
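
The general shape of this idea can be sketched in a few lines of Python. This is only an illustration under strong assumptions, not the model used in the paper cited below: it assumes peak memory grows linearly with task size (for example, the number of events per task), fits that line by ordinary least squares from completed tasks, and inverts it to pick the next task size. All names and parameters here are hypothetical.

```python
from typing import Sequence, Tuple


def fit_line(sizes: Sequence[float], memories: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of memory = intercept + slope * size."""
    n = len(sizes)
    if n < 2 or n != len(memories):
        raise ValueError("need two or more paired observations")
    mean_x = sum(sizes) / n
    mean_y = sum(memories) / n
    sxx = sum((x - mean_x) ** 2 for x in sizes)
    if sxx == 0:
        raise ValueError("need at least two distinct task sizes")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes, memories))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def next_task_size(sizes: Sequence[float], memories: Sequence[float],
                   target_mb: float, min_size: int = 1,
                   max_size: int = 1_000_000) -> int:
    """Pick a task size whose predicted memory is close to target_mb."""
    intercept, slope = fit_line(sizes, memories)
    if slope <= 0:
        # Under this model memory does not grow with size, so size is
        # limited only by the configured maximum.
        return max_size
    size = int((target_mb - intercept) / slope)
    return max(min_size, min(max_size, size))
```

For instance, if tasks of 1000 and 3000 events were observed to use 300 MB and 700 MB, the fitted line is roughly 100 MB plus 0.2 MB per event, and a 2100 MB target suggests tasks of about 10000 events. In practice the fit would be refreshed as more tasks complete, so the task size keeps tracking the target.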

To learn more, read some of our research papers:

- Ben Tovar, Ben Lyons, Kelci Mohrman, Barry Sly-Delgado, Kevin Lannon, and Douglas Thain, Dynamic Task Shaping for High Throughput Data Analysis Applications in High Energy Physics, IEEE International Parallel and Distributed Processing Symposium, June, 2022.

- Thanh Son Phung, Logan Ward, Kyle Chard, and Douglas Thain, Not All Tasks Are Created Equal: Adaptive Resource Allocation for Heterogeneous Tasks in Dynamic Workflows, WORKS Workshop on Workflows at Supercomputing, November, 2021.
